State of the Impact Debate: Storming, Norming, Sometimes Performing

The meetings are coming fast and furious now.

In late March, BRITDOC convened an all-star room of makers, impact producers, analysts and funders for an Impact Lab to close in on strategy and evaluation standards for social-issue documentary (see our Storify coverage). This past week, the Center for Investigative Reporting hosted two separate two-day events: Dissection C, focused on film, in NYC, and Dissection D, focused on public media, in DC. These gatherings follow on the heels of our own Media Impact Focus events at The Paley Center and the Annenberg Space for Photography, and of a series of earlier closed-door sessions co-hosted by the Knight and Gates Foundations. All of them grapple with the same complex question: how best to assess the ways in which media engages audiences.

What’s the upshot, and what are the next steps? Here’s a brief primer.

Tools and Frameworks Beginning to Gel

One thread that unites all of these discussions is the search for shared evaluation methods. A handful of evolving tools have been a focal point, each with its own take on the challenge of tracking the complex responses of audiences across platforms over time:

- ConText: Developed by Assistant Professor Jana Diesner and her team at the University of Illinois, this tool helps researchers analyze online conversations and coverage of a documentary across traditional and social media. It visualizes the networks of people and organizations discussing the film and surfaces key themes and sentiments. The software is free to download, but it requires real tech savvy and time to implement.

- ImpactSpace: Developed by the Harmony Institute, this ambitious platform is still in beta. It combines a variety of online data collection methods to reveal the impact of individual social issue documentaries, and then stacks them up against other films on the same issue or against a broad selection of films covering many issues. The tool draws on the visual metaphor of a "universe" to orient users, allowing them to zoom in and out of different levels of analysis and see how online conversations are shifting.

- The Participant Index: Jointly developed by Participant Media and the Media Impact Project at USC Annenberg’s Lear Center, this tool is also in a beta phase. Researchers are using a combination of survey data and media metrics to assess the ability of selected documentary, entertainment, TV and online productions to shift audience members’ attitudes and move them to action.

- Sparkwise: Originally developed by Wendy Levy at the Bay Area Video Coalition, this tool is now managed by Tomorrow Partners. While the tools above let users analyze impact from the outside in, this free dashboard is designed to help producers and nonprofit strategists track, ignite and report engagement from the inside out. A customizable set of widgets pulls and displays data from commonly used social media platforms, and makers can build their own trackers to drive audiences to participate or contribute. Here's an example of how one production, The Revolutionary Optimists, is using this tool to round up data and tell its impact story.

- Structured case studies: While these tools are beginning to standardize methods and categories for impact analysis, quantitative data alone often can't do justice to the story of social issue film campaigns that combine online and offline strategies and experiment with outreach on emerging platforms. To capture these narratives and make them comparable, BRITDOC has developed a case study template used in the PUMA Impact Awards judging process, as well as a set of related distribution and outreach models. Many other funders, researchers and makers are also creating case studies and accompanying visual frameworks to illuminate how specific media projects meet their impact goals. See our AIM Research section and this compilation of BRITDOC Impact Lab resources for examples from public media, gaming, entertainment, storytelling for social change and more.

In addition to tracking the progress of these emerging tools (many of which have been developed with direct support from foundations), recent impact gatherings have also considered how producers and funders are using off-the-shelf and proprietary tools to track impact.

On one side of the equation are free or relatively cheap tools such as Google Analytics or HootSuite; on the other are high-dollar commercial services from vendors such as Nielsen or Chartbeat. For many journalists, the tools designed to track long-form documentary impact don't sync up with the daily drumbeat of coverage. Instead, they are keeping a close and often skeptical eye on how digital-first news and social issue sites such as BuzzFeed and Upworthy are optimizing content for maximum reach and spreadability.

The Nieman Journalism Lab has published several incisive pieces on these debates and related research, which we showcase in our AIM articles section along with parallel analysis from Poynter, Forbes, Wired, Fast Company and other leading bloggers and outlets.

Key Tensions

But while makers, funders and analysts are now regularly meeting up to discuss how best to gauge the impact of public interest media, the field is still very much in flux.

At the BRITDOC Impact Lab, Liz Manne of FilmAid International shared psychologist Bruce Tuckman’s model for understanding the stages of team building: forming, storming, norming, performing and adjourning/mourning.

Right now, those developing new impact models are straddling the uncomfortable gap between storming and norming. Central issues include:

- Disparate definitions: The term “impact” is still widely interpreted to mean different things by different stakeholders in this debate, ranging from a very general affirmation of access to high-quality information as a basis for democratic action to very specific attempts to move influencers to action on narrow issues.

- Clashing cultures: While funders may comfortably support work across a variety of platforms and genres, editors and producers in those fields don’t always share assumptions about what’s appropriate, relevant or possible to measure.

- Fear of formulas: Producers protest that evaluation methods based primarily on quantitative data analysis miss the deeper nuances of culture shifting and network-building that happen over time and offline. They are justifiably concerned that cookie-cutter approaches might stifle creativity or discourage support for shifts in strategy.

- Platform churn: The continual and rapid emergence of new digital and mobile devices, along with consumers' shifting media consumption habits, makes it extremely challenging to keep track of and synthesize the varied rubrics for gathering and interpreting metrics.

- Lack of resources: Many grantees cite the absence of support earmarked for impact analysis as a barrier to this work.

So, now what?

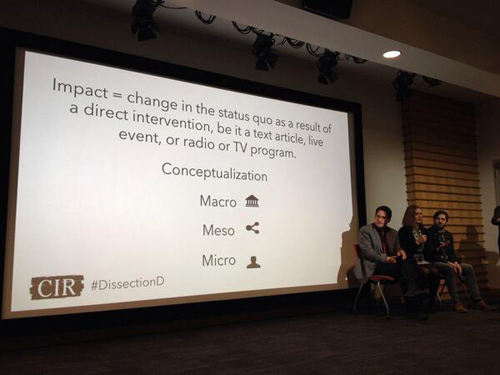

The only way out of these issues is through. While it's possible to develop baseline best practices for evaluation, no single tool or framework will save the day. Different communities of practice, organized by platform, issue or genre, will need to keep hashing out the specifics of how they define and track impact. For example, see the model for understanding collective impact on a community level, above, developed by the St. Louis-based Nine Network of Public Media, or CIR's working definition of impact for investigative journalism, below.

At Media Impact Funders, our goal is to serve as a resource, reporting hub and partner for this continually shifting field. For the Dissection events, we partnered with CIR to develop a bibliography that illuminates the range of debates and methods. Over the coming months, we'll deepen our reporting on how foundations are supporting research into audience engagement, and how they're using impact tools to inform and assess the work of their grantees. We'll also test out various emerging approaches with the films featured in our upcoming Media Impact Festival.

Sign up for our monthly AIM Bulletin to stay abreast of what we uncover.