True story: How fake news skews our impact models and what we can do about it

Up in the lofty reaches of theory, the case for the impact of news is clear: Reporters report facts in good faith, and audiences consume these stories and deliberate with others who might not share their perspectives. In the process, they’re better informed to act in their role as citizens, and a better democracy results. Down here in the trenches of 2016, though, the impact story is much messier.

Not only is trust in journalism at a record low and misinformation rampant, but it turns out there’s a cadre of fake news writers making good money by actively spreading disinformation. Previous concerns about “truthiness” have given way to outright panic about the Oxford Dictionaries’ word of the year: “post-truth.” Lies and facts now look the same on the social platforms and aggregation sites where many of us get our information, and recent research even suggests that well-crafted hoaxes generate more engagement.

In previous AIM Analysis posts, we’ve dug into questions around the efficacy of fact-checking, and the debate around the value of comments sections given how polarized and uncivil online discourse can be. This month, we’re zeroing in on the various proposals to stem the pernicious impact of faux journalism.

Cutting off the flow at the platform level

By far, the most visible debate about how and why fake news spreads so quickly revolves around the role that large social platforms such as Facebook, Google and Twitter play as conduits. While these platforms have in the past tried to claim that they’re just neutral pipes for content, sustained post-election pressure is beginning to move them to action.

The New York Times’ “Mediator” columnist Jim Rutenberg has been hot on the heels of this story, both rounding up outrageous fake pieces and urging Facebook to take responsibility for propagating falsehoods and propaganda. However, his observation that “The cure for fake journalism is an overwhelming dose of good journalism” falls flat in the face of algorithms that elevate popular hoaxes among those most likely to believe them and reward hoaxers with more ad dollars.

On the Nieman Lab site, Northwestern University professor Pablo J. Boczkowski explains why he thinks traditional news is rapidly losing influence while social media is gaining it.

“[T]he commercial priorities of a company like Facebook shapes the algorithmic logic of its News Feed: The happier we are, the more likely the ads shown to us will be effective, so the algorithm prioritizes information items that are consistent with our viewpoints,” he writes.

“So even if we were presented with a large number of news stories and paid significant attention to them, the likelihood of obtaining information that exposed us to alternative viewpoints and helped us learn something new would be relatively low. This algorithmic logic further insulates people from the influence of news media stories that could potentially alter pre-existent political preferences.”

Such algorithms are difficult to decipher because they’re proprietary, observes Zeynep Tufekci, an associate professor at UNC Chapel Hill whose work focuses on the intersection of technology and social movements.

“Facebook should also allow truly independent researchers to collaborate with its data team to understand and mitigate these problems,” she writes. “A more balanced newsfeed might lead to less ‘engagement,’ but Facebook, with a market capitalization of more than $300 billion and no competitor in sight, can afford this.”

Sticky wickets: Bias, opinion, humor

Algorithms aren’t the only problem, however, when it comes to digital platforms serving up dubious news. At the end of the day, human editors are needed to determine if a story is simply slanted or outright false. Hiring such arbiters shifts the role of platforms from conduits to publishers, putting them in the position of making judgment calls that stand to anger customers.

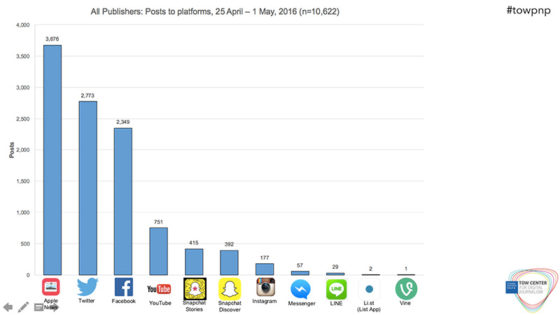

Of course, many observers say, the cat’s out of the bag—Facebook, Google and Twitter are already so closely intertwined with publishers that making such distinctions is splitting hairs. In June, Emily Bell of the Tow Center for Digital Journalism at the Columbia Journalism School released research about how platforms including Facebook, Apple, Twitter, Snapchat and Google are “shaping every aspect of news production, distribution, and monetization.”

If digital platforms are serving as the de facto public square, deciding which outlets will be seen by users when and where, then it’s clear why they’re being asked to make some tough calls about what constitutes legitimate news. The difficulty of this task, however, was highlighted in recent efforts to create definitive lists of “fake news” sites.

First, Melissa Zimdars, an assistant professor at Merrimack College, put together a list of news sites that she deemed misleading due to their habit of “using distorted headlines and decontextualized or dubious information.” Seems straightforward, but as it turns out, the list contained not just hoax sources but satirical sites such as The Onion. Conservative sites also protested their inclusion on the list. Even actual fake news writers are crying foul, claiming that their work is a form of satire. Zimdars has since removed the list to update, categorize and permanently archive it, based on the suggestions of many respondents.

Digital platforms seeking to filter out fake stories will face similar contested decisions on a daily basis. As a result, neither purely human nor purely automated filtering strategies will work.

Instead, as CUNY journalism professor Jeff Jarvis and Betaworks CEO John Borthwick suggest, a mix of approaches will be needed:

- Mechanisms for users to report false news;

- Tools for attaching fact-checks to stories;

- Verification standards for news sources;

- Support for both reference sites that debunk toxic memes and white-hat hackers who build automated solutions;

- Better methods for disseminating corrections; and

- More collaboration among platforms, publications and universities to tackle this multifaceted problem.

They invite others to contribute their own solutions.

Training news audiences to spot nonsense

Of course, only so much can be done to fix the problem on the supply side. Ultimately, the problem lies on the demand side, among credulous news consumers who are emotionally primed to believe the worst about politicians and the powerful.

“Sorry, but I don’t want Facebook to be the arbiter of what’s true,” writes Arizona State University professor and author Dan Gillmor. “Nor do I want Google — or Twitter or any other hyper-centralized technology platform — to be the arbiter of what’s true.”

Instead, he argues, the platforms should help users learn how to read skeptically, seek out multiple perspectives, and create their own media. Primers such as this “How to Spot Fake News” piece from FactCheck.org are a useful start.

This goes not just for the untrained, but for those of us who make a living thinking about, funding and making media. Platforms and user habits are changing faster than we can track, and our own theories of media impact can quickly become outdated. It’s only by consuming recent case studies (see, for example, this dissection of how one fake news story went viral) and checking our own biases with the help of tools such as this Political Media Barometer that we can encounter the edges of our own false assumptions.

Fake news isn’t the only problem that journalists and democracy are facing—as Joshua Benton observes: “The forces that drove this election’s media failure are likely to get worse.” But without verifiable facts, any impact theory for journalism is doomed to fail. So, it’s a clear place to start rebuilding.

Media Impact Funders research consultant Katie Donnelly contributed to this article.